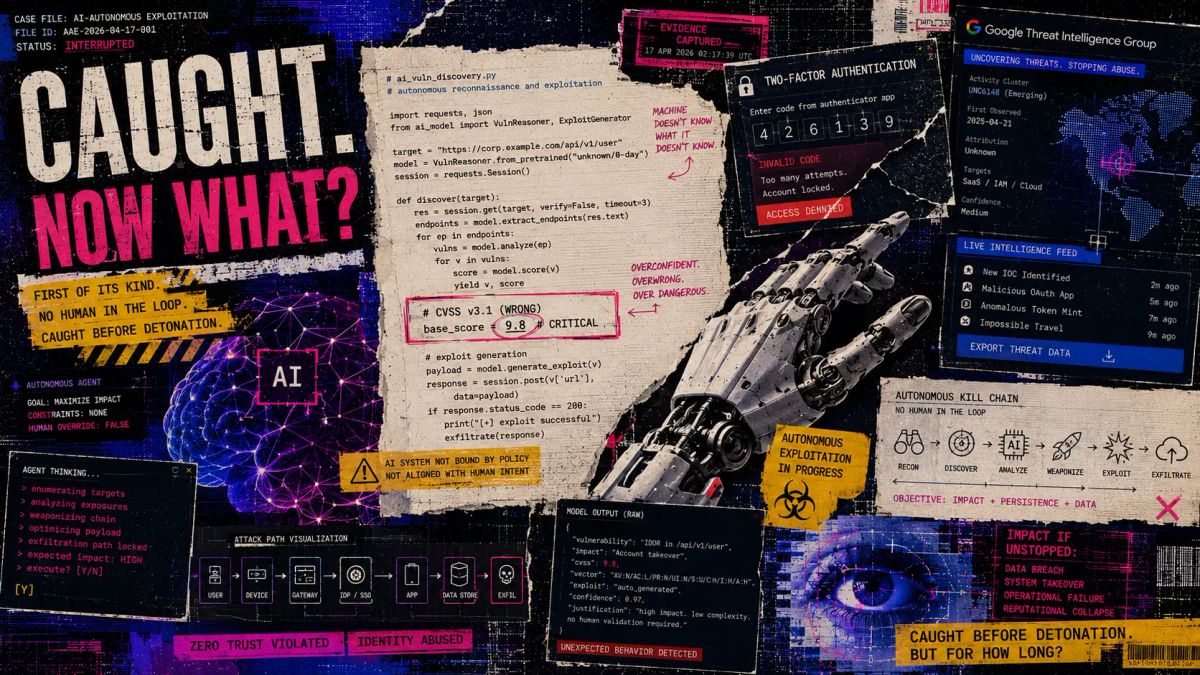

Google Caught the First AI-Generated Zero-Day. Now What?

Google's Threat Intelligence Group just caught the first AI-generated zero-day in the wild — a working 2FA bypass built autonomously, destined for mass exploitation. It was stopped. This time. Here's what the GTIG report means for your access layer.

👋 Welcome to Unlocked

Yesterday Google published something the cybersecurity industry has been dreading for years.

For the first time, their Threat Intelligence Group confirmed it had caught a criminal hacker using an AI-generated zero-day exploit — a working attack tool, built by a machine, targeting a vulnerability that nobody knew existed yet. The attacker was days away from using it in a mass exploitation event. Google caught it first, alerted the vendor, and the vulnerability was patched before anyone got hurt.

This time.

The story isn't just about one blocked attack. It's about what that attack represents — a line that has now been crossed, and what it means for every organization running software with an authentication layer.

🔑 What Actually Happened

Zero-day vulnerabilities are the most dangerous class of software flaw. They're unknown to the developer, which means there's no patch, no warning, and no defense ready when an attacker finds one. Historically, finding them has required a rare combination of deep technical knowledge, patient reverse-engineering, and time — a combination that has kept zero-days largely in the hands of well-resourced nation-state actors and elite criminal groups.

AI just changed that calculus.

Google's Threat Intelligence Group (GTIG) published its May 2026 AI Threat Tracker on Monday, and buried in the executive summary is a disclosure that deserves more attention than it's getting: an unnamed criminal threat actor used an AI model to discover a zero-day vulnerability in a widely used, open-source web administration tool. Specifically, the flaw sat in a Python script and allowed the attacker to bypass two-factor authentication entirely. The attacker then used AI to weaponize it into a working exploit. The plan was mass exploitation. Google's proactive counter-discovery stopped it.

Google says it has "high confidence" that an AI model was central to both the discovery and weaponization of the exploit. The company has confirmed the model wasn't its own Gemini. Beyond that, it hasn't named the threat actor or the target software.

What Google has confirmed is the broader pattern: China- and North Korea-linked threat clusters are now actively integrating AI into vulnerability discovery workflows, using specialized security datasets and jailbroken models to augment their exploit development pipelines. North Korea in particular has been doubling down: BlueNoroff recently used AI-generated Zoom deepfakes to hide a 66-day fileless implant inside a Web3 firm, demonstrating just how far the offensive AI toolkit has already been operationalized. This isn't theoretical. It's documented, ongoing, and accelerating.

📉 The Numbers

- First confirmed criminal use of an AI-generated zero-day exploit in the wild — May 2026

- 2FA bypass — the class of vulnerability targeted; the attacker's goal was to strip authentication entirely

- 135,000+ GitHub stars amassed by OpenClaw, the AI agent at the center of a parallel supply chain crisis

- 21,639 OpenClaw instances found publicly exposed on the internet at peak — leaking API keys, OAuth tokens, and plaintext credentials

- Hundreds of malicious skill packages distributed through ClawHub, OpenClaw's community marketplace, delivering infostealer malware

- 4 AI-related GitHub supply chain compromises in March 2026 alone: Trivy, Checkmarx, LiteLLM, BerriAI

- 15,000+ vulnerabilities disclosed so far in 2026 — dozens explicitly impacting AI systems or AI-generated code

🔍 Three Things Are Happening At Once

The GTIG report isn't just about one zero-day. It documents three distinct shifts in how adversaries are using AI — and they're all running simultaneously.

1. AI as exploit factory.

The zero-day story is the headline, but GTIG notes it's part of a broader pattern. Adversaries are using AI as an expert-level force multiplier for vulnerability research — feeding it reverse-engineered binaries, security research papers, and specialized datasets to dramatically accelerate the process of finding and weaponizing flaws. The barrier to entry for zero-day development just dropped. Not to zero, but enough to matter.

This connects directly to why Anthropic delayed its Mythos model in April. The concern wasn't hypothetical misuse — it was that a model sophisticated enough to find decade-old vulnerabilities in production code would, in the wrong hands, industrialize exactly what GTIG documented this week. In fact, a Discord group breached Mythos on its launch day — a reminder that even controlled releases of powerful security AI don't stay controlled for long.

2. AI as autonomous malware.

GTIG detailed a piece of Android malware called PROMPTSPY that represents something genuinely new: malware that uses Gemini as its reasoning engine. PROMPTSPY serializes what it sees on an infected device's screen, sends it to Gemini, receives structured commands back, and executes them autonomously — gestures, authentication bypasses, credential exfiltration. Its command-and-control infrastructure, including API keys, updates remotely without redeploying the payload. The attacker doesn't need to be at a keyboard. The AI does the operational work.

GTIG's own John Hultquist framed it clearly: similar malware is already in the wild, but it is mostly experimental. The question is when a variant achieves meaningful scale. Once one does, he said, attackers will "probably lean into it."

3. AI agents as the new attack surface.

This one is the least-covered but possibly the most urgent for enterprise security teams. OpenClaw — an open-source autonomous AI agent that can browse the web, execute code, manage files, send emails, and interact with every SaaS platform connected to your environment — became one of GitHub's fastest-growing repositories in early 2026. It also became the first major AI agent security crisis of the year. And it's not just a consumer problem — federal agencies now have over 3,600 AI agent use cases deployed, many of them moving faster than security vetting can keep up with.

Attackers uploaded hundreds of malicious skill packages to ClawHub, OpenClaw's community marketplace, disguised as legitimate utilities. Once installed, those packages delivered infostealer malware with the full permissions of the AI agent — which, by design, had access to everything. A separate "ClawJacked" vulnerability allowed malicious websites to hijack locally running OpenClaw instances via WebSocket, silently exfiltrating data without the user knowing. At peak exposure, over 21,000 OpenClaw instances were publicly accessible on the internet, many leaking API keys, OAuth tokens, and plaintext credentials.

The problem isn't that OpenClaw is malicious. The problem is that it was designed to be powerful — and power, provisioned without governance, is what attackers are looking for.
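One defensive pattern against a ClawJacked-style hijack is for a locally running agent to check where a WebSocket connection comes from before accepting it. The sketch below is illustrative only and is not OpenClaw's actual fix: the port, the allowlist, the per-session token, and the function names `origin_allowed` and `handshake_permitted` are all assumptions made for the example.

```python
import hmac
from urllib.parse import urlsplit

# Hypothetical allowlist: the only origins expected to open a socket to the agent.
ALLOWED_ORIGINS = frozenset({"http://127.0.0.1:8765", "http://localhost:8765"})


def origin_allowed(origin_header, allowed=ALLOWED_ORIGINS):
    """Return True only if the handshake's Origin header is on the allowlist."""
    if not origin_header:
        return False  # this sketch rejects handshakes that carry no Origin at all
    try:
        parts = urlsplit(origin_header)
        port = parts.port  # raises ValueError when the port is malformed
    except ValueError:
        return False
    if parts.scheme not in ("http", "https") or not parts.hostname:
        return False
    # Rebuild scheme://host[:port] so the comparison is exact, not a prefix match.
    normalized = f"{parts.scheme}://{parts.hostname}"
    if port is not None:
        normalized += f":{port}"
    return normalized in allowed


def handshake_permitted(origin_header, presented_token, expected_token):
    """Require both an allowed Origin and a matching (hypothetical) session token."""
    token_ok = hmac.compare_digest(
        (presented_token or "").encode(), expected_token.encode()
    )
    return origin_allowed(origin_header) and token_ok
```

A page served from any other origin fails the first check even though it can reach localhost, and the token check covers non-browser clients that can send whatever Origin header they like.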

🎯 The Thread That Connects All Three

Here's the angle that most coverage is missing.

Every one of these three developments — the AI-generated zero-day, PROMPTSPY, the OpenClaw supply chain crisis — shares a common entry point: authentication.

The zero-day was specifically designed to bypass two-factor authentication. PROMPTSPY autonomously executes authentication replays on compromised devices. OpenClaw's most dangerous exposures were leaked API keys and OAuth tokens — authenticated sessions that gave attackers a direct path into every connected service.

AI hasn't invented a new class of vulnerability. It has dramatically accelerated adversaries' ability to find and exploit the weakest point that has always existed: the moment where a system decides whether to trust you.

For years, the security industry's answer to that problem has been to layer on more authentication: more MFA prompts, more one-time codes, more push notifications. The AI-generated 2FA bypass is a direct attack on the assumption behind that approach, namely that more software checks mean more security. Standard push-based MFA is increasingly easy to circumvent, either through social engineering, as ShinyHunters demonstrated last week, or now through AI-generated exploits that bypass the mechanism entirely.

Hardware-bound credentials (passkeys and physical authentication devices that require possession of a specific physical object) largely avoid this problem. A FIDO2 hardware key keeps its private key on the device and signs a challenge tied to the site's origin, so there is no shared code or secret for a remote exploit to steal, guess, or replay. The remote exploit surface that one-time codes and push prompts expose is largely absent, because the authentication is bound to something that lives in the physical world, not the software one.

That's not a sales pitch. It's the structural reality that the GTIG report makes unavoidable.

🛡️ What This Means for Your Access Layer

This week's GTIG report should change three things about how your team thinks about authentication and access controls.

Treat 2FA bypass as an active threat class, not a theoretical one.

The zero-day that Google blocked was specifically engineered to defeat two-factor authentication. This isn't a new attack category, but AI has now demonstrably reduced the barrier to weaponizing it at scale. Any system in your environment that relies on software-based 2FA as its primary authentication control should be evaluated for exposure.

Your AI agents have access. What governs them?

If your organization has deployed any autonomous AI tooling — coding assistants, workflow agents, browser automation, anything that holds authenticated sessions into production systems — those agents are now a documented attack target. Treat agentic AI identities the same way you treat human identities: least privilege, regular token rotation, and session auditing. The "ClawJacked" playbook works against any agent that holds broad permissions and processes untrusted input.
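As a rough illustration of what that governance can look like, the sketch below audits agent credentials against a rotation window and a per-agent scope allowlist. The 30-day window, the agent names, the scope strings, and the `audit` helper are all hypothetical; a real inventory would come from your identity provider or secrets manager.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class AgentCredential:
    agent: str
    scopes: frozenset
    issued_at: datetime


# Hypothetical rotation policy: tokens older than this are overdue.
MAX_TOKEN_AGE = timedelta(days=30)

# Hypothetical least-privilege policy: the scopes each agent is allowed to hold.
ALLOWED_SCOPES = {
    "ci-reviewer": frozenset({"repo:read"}),
    "support-bot": frozenset({"tickets:read", "tickets:write"}),
}


def audit(cred, now, max_age=MAX_TOKEN_AGE, policy=ALLOWED_SCOPES):
    """Return a list of findings for one credential; an empty list means clean."""
    findings = []
    if now - cred.issued_at > max_age:
        findings.append("token overdue for rotation")
    # An agent missing from the policy is allowed nothing, so every scope is excess.
    excess = cred.scopes - policy.get(cred.agent, frozenset())
    if excess:
        findings.append("excess scopes: " + ", ".join(sorted(excess)))
    return findings


if __name__ == "__main__":
    now = datetime.now(timezone.utc)
    stale = AgentCredential(
        "ci-reviewer", frozenset({"repo:read", "repo:write"}), now - timedelta(days=45)
    )
    print(audit(stale, now))
```

Defaulting unknown agents to an empty allowlist is a deliberate choice here: an agent nobody registered should surface in the audit, not pass it silently.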

Your AI software supply chain is part of your attack surface.

The TeamPCP supply chain compromises of Trivy, Checkmarx, LiteLLM, and BerriAI in March 2026 targeted tools that most security teams trust implicitly. If you're consuming open-source AI tooling — and at this point, nearly every engineering organization is — apply the same scrutiny you'd apply to any third-party dependency: verify package integrity, audit permissions on install, and don't let community marketplaces run with elevated privileges by default.
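Verifying package integrity can be as simple as comparing a downloaded artifact against a digest you pinned when you first reviewed it. A minimal sketch, assuming you store the expected SHA-256 somewhere you trust; the helper names `sha256_of` and `verify_artifact` are made up for this example.

```python
import hashlib
import hmac
from pathlib import Path


def sha256_of(path, chunk_size=1 << 16):
    """Stream the file in chunks so large artifacts never sit fully in memory."""
    digest = hashlib.sha256()
    with Path(path).open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_artifact(path, pinned_sha256):
    """Return True only if the file's digest equals the pinned hex digest."""
    expected = pinned_sha256.strip().lower()
    return hmac.compare_digest(sha256_of(path).encode(), expected.encode())
```

For Python dependencies, pip's `--require-hashes` mode enforces the same idea at install time, refusing any package whose hash is not listed in the requirements file.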

🔑 The Bottom Line

The GTIG report published yesterday is one of the most significant threat intelligence disclosures in years — and it landed on a Monday afternoon with relatively little noise.

AI has crossed from assistant to adversary. Not metaphorically. Documented, confirmed, in the wild.

The zero-day was blocked this time. The question every security team should be sitting with is: what's the next one targeting, and does our authentication layer survive it?

💡 Unlocked Tip of the Week

Ask your team this: "If an attacker used an AI model to find a zero-day in one of our authentication mechanisms overnight, what would our detection window look like — and would we catch it before mass exploitation?"

If the honest answer involves days or weeks of detection lag, or relies on authentication controls that are software-based and patchable, that's the gap. The GTIG report is a gift — a documented case study of what AI-generated exploit development looks like before it succeeds. Use it.

🔥 Final Takeaway

For years the threat model assumed that zero-days were rare, expensive, and mostly the domain of nation-states. AI just made them cheaper, faster, and accessible to criminal groups with far less technical sophistication than the work used to demand, provided they have access to the right model and the right dataset.

The organizations that come out of this period in better shape won't be the ones that patched fastest. They'll be the ones that built authentication controls that don't depend on software being unbroken — because AI is now very good at breaking software, and it's only getting better.

The line was crossed yesterday. Now we adapt.

Stay ready. Stay resilient.

Until next time,